The Week AI Platforms Changed: Trust, Multi-Model Reality, and the Outcome Economy

March 14, 2026


In case you've been living under a rock for the last few weeks, here are a few things that caught my attention and are worth connecting.

The Reputation Shift

OpenAI took a serious reputational hit when it signed a Department of War agreement after Anthropic publicly refused certain surveillance terms. On February 26, 2026, Anthropic CEO Dario Amodei published a statement describing Anthropic's stance: keep two exclusions (mass domestic surveillance and fully autonomous weapons) and refuse "any lawful use" terms that would remove those safeguards. Amodei was careful to say he supports using AI "to defend the United States and other democracies" and said Anthropic has already deployed Claude in classified US government contexts. He framed the exclusions as reliability and oversight limits, not as an anti-defense position.

Whether you agree or not, the contrast was clear. The internet noticed.

AP reported that Claude outpaced ChatGPT in U.S. phone app downloads for the first time during the week of March 2-8, 2026, citing Sensor Tower data and explicitly linking it to the Pentagon dispute. That's not sentiment analysis or Twitter vibes. That's measurable distribution impact.

OpenAI published "Our agreement with the Department of War" on February 28, 2026, with three red lines: no mass domestic surveillance, no directing autonomous weapons, and no automated high-stakes decisions. After backlash, it amended it on March 2 to add explicit language ruling out domestic surveillance, including via commercially acquired personal data, and stated that intelligence agencies like the NSA would require a new agreement. The fact that they changed contract language means the criticism was loud enough to matter.

Meanwhile, the Pentagon designated Anthropic a "supply chain risk" in early March 2026. AP described this as unprecedented, with the Pentagon framing it as needing the military to use tech for "all lawful purposes." Anthropic published a follow-up on March 5 saying it had received the letter and would challenge it in court, and arguing that the designation's scope is narrow.

The takeaway: trust is no longer a safety PDF. It's a growth lever. Anthropic also announced on February 4, 2026 that Claude will remain ad-free because ad incentives conflict with being "unambiguously in our users' interests." That's product positioning, not corporate social responsibility.

Multi-Model Reality Just Became Official

Microsoft quietly killed the "single model winner" narrative.

On March 9-10, 2026, Microsoft published a "Frontier Suite" post stating "Microsoft 365 Copilot is model diverse by design," built for a "heterogenous environment," and "leverages leading models from OpenAI and Anthropic." Claude is now available "in mainline chat in Copilot via the Frontier program, alongside the latest generation of OpenAI models." The same post frames "Intelligence + Trust" as the two essentials.

This isn't a pilot. This is architecture. Copilot Studio (updated January 6, 2026) describes Anthropic models rolling out "alongside OpenAI models" with admin controls for orchestration, chat, and deep reasoning, plus automatic fallback to OpenAI if Anthropic is disabled.

Read that again. Microsoft built automatic failover between foundation models.
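For teams that want the same property in their own stack, here is a minimal sketch of ordered failover between providers. It is not Microsoft's implementation: the `Provider` class, the `enabled` flag (standing in for an admin toggle), and the stub callables are all hypothetical, and a real version would wrap actual SDK calls with timeouts and logging.

```python
from dataclasses import dataclass
from typing import Callable, Sequence, Tuple


class AllProvidersFailed(RuntimeError):
    """Raised when every provider is disabled or raises an error."""


@dataclass
class Provider:
    name: str                    # label only, e.g. "anthropic" or "openai"
    call: Callable[[str], str]   # hypothetical: takes a prompt, returns text
    enabled: bool = True         # stands in for an admin on/off control


def complete_with_fallback(prompt: str, providers: Sequence[Provider]) -> Tuple[str, str]:
    """Try providers in order; return (provider_name, text) from the first success."""
    errors = []
    for provider in providers:
        if not provider.enabled:
            errors.append(f"{provider.name}: disabled")
            continue
        try:
            return provider.name, provider.call(prompt)
        except Exception as exc:  # any failure moves on to the next provider
            errors.append(f"{provider.name}: {exc}")
    raise AllProvidersFailed("; ".join(errors))


# Stub callables so the sketch runs without network access or API keys.
def fake_anthropic(prompt: str) -> str:
    raise TimeoutError("simulated outage")


def fake_openai(prompt: str) -> str:
    return f"[openai stub] {prompt}"


PROVIDERS = [Provider("anthropic", fake_anthropic), Provider("openai", fake_openai)]

if __name__ == "__main__":
    used, text = complete_with_fallback("Draft a status update", PROVIDERS)
    print(used, text)
```

The point of the design is that the calling code never names a model. Swapping the order of `PROVIDERS`, or flipping `enabled`, changes which model answers without touching the workflow.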

Why? Resilience, bargaining power, governance constraints, and workload fit. Even if one model is objectively best, Microsoft's incentive is to keep them swappable. The Copilot Studio post explicitly encourages choosing "the best model for your use case" and even coordinating multiple agents with different "primary models."

For teams building AI workflows: stop betting on "the winner." Your prompt library needs to work across Claude, GPT, and Gemini. Your governance framework needs model-agnostic controls. Your team needs to know when to use which model for what.
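One way to picture a model-agnostic prompt library with a governance check is sketched below. Everything in it is an assumption for illustration: the model names (`model-a`, `model-b`), the routing table, the allow-list, and the neutral `{"model", "messages"}` request shape, which each vendor adapter would still need to translate into its own API format.

```python
from string import Template

# Prompts are stored once, in a vendor-neutral form.
PROMPTS = {
    "summarize": Template("Summarize the following text in $n bullet points:\n$text"),
    "code_review": Template("Review this diff and list likely bugs:\n$diff"),
}

# Hypothetical routing table: which model handles which task type.
ROUTES = {"summarize": "model-a", "code_review": "model-b"}
DEFAULT_MODEL = "model-a"

# Hypothetical governance control: models the organization has approved.
ALLOWED_MODELS = {"model-a", "model-b"}


def build_request(task: str, **fields) -> dict:
    """Render a prompt and pick a model, returning a vendor-neutral request."""
    model = ROUTES.get(task, DEFAULT_MODEL)
    if model not in ALLOWED_MODELS:
        raise PermissionError(f"model {model!r} is not approved")
    prompt = PROMPTS[task].substitute(**fields)  # KeyError if a field is missing
    return {"model": model, "messages": [{"role": "user", "content": prompt}]}


if __name__ == "__main__":
    print(build_request("summarize", n=3, text="Quarterly results were mixed."))
```

Keeping the prompt text, the routing decision, and the approval list in three separate places means each can change on its own: a new model is a one-line routing edit, and a policy change is a one-line allow-list edit.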

The moat shifted from the model to distribution (M365), governance, and data context (Work Graph).

AI Services Are Eating SaaS

Anthropic launched Claude Marketplace in limited preview on March 6-7, 2026. Companies can now "use your existing Anthropic commitment to pay for Claude-powered solutions." Enterprises apply part of an existing spend commitment toward partner solutions. Anthropic consolidates invoicing. Initial partners include GitLab, Harvey, Lovable, Replit, Rogo, and Snowflake.

This changes the procurement game.

Traditional buying: SaaS contract, seat licenses, maybe AI features inside

Marketplace buying: AI commitment, outcome-specific tools, consolidated AI spend pool

Sequoia's "Services: The New Software" piece (March 5, 2026) frames it perfectly: the next trillion-dollar company may be "software masquerading as a services firm." The logic is simple. If you sell the tool, you risk becoming a feature. If you sell outcomes, your delivery gets cheaper and faster as models improve.

Claude Marketplace is infrastructure for outcome vendors. It's a distribution and billing rail for companies whose product is AI-driven services.

Compare this to OpenAI's Apps in ChatGPT ("Introducing apps in ChatGPT and the new Apps SDK"), which focuses on in-chat actions and consumer workflow integration. Pilot partners include Booking.com, Canva, Coursera, Expedia, Figma, Spotify, and Zillow. Two different plays: OpenAI owns the chat surface, Anthropic wants to own the budget line.

Marketplaces are showing up now because the model layer just got commoditized (Microsoft proved it). Value migrates to distribution, spend control, and governance.

We're Nowhere Near Peak AI

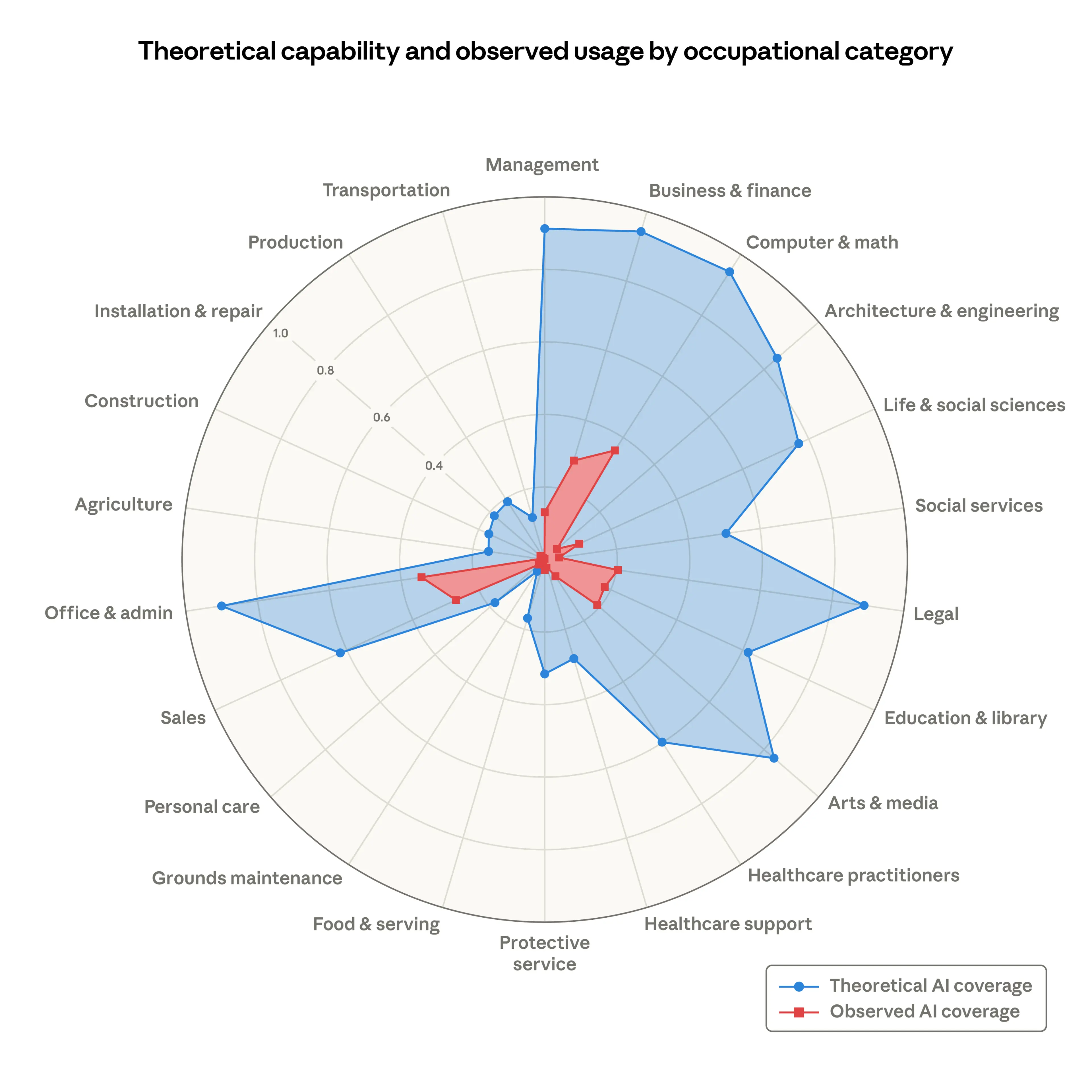

Anthropic's 2025 Economic Index (based on the paper "Which Economic Tasks are Performed with AI?") shows AI usage concentrates in software development and writing tasks (nearly half of all usage). Occupations requiring physical labor are least present: Transportation and Material Moving, Healthcare Support, and Farming, Fishing, and Forestry are explicitly listed as examples.

Only a small slice of occupations show AI use across at least 75% of their tasks. A larger but still partial slice shows AI use across at least 25% of tasks. Translation: AI is touching many jobs a little, but few jobs a lot.

Meanwhile, "directive" conversations (delegating complete tasks, not just chatting) rose from 27% to 39% in eight months per Anthropic's September 2025 report.

The bottleneck isn't model capability. It's workflow integration and outcome delivery.

Caveat: Anthropic warns the dataset reflects usage on one platform and may overrepresent coding because Claude is advertised as strong at coding. They don't claim it's representative of all AI use. But the directional signal is clear: we're early.

Even software engineering hasn't hit saturation. Food service, logistics, and other physical industries are barely touched.

The Education Gap Is Getting Worse

Meanwhile, in the Netherlands, enterprise workers get LinkedIn Learning or Coursera access and are told "go learn AI."

I watched a few of those courses. Why does a market analyst need to know what a diffusion model is? The content is so technical, vague, and boring that half the people give up before building anything useful.

Two months ago, AI literacy meant knowing how to prompt ChatGPT. Today it means:

- Understanding when to use GPT vs Claude vs Gemini

- Orchestrating multi-agent workflows

- Building governance frameworks for model diversity

- Evaluating outcome vendors, not just model vendors

Theory-heavy AI 101 courses don't teach this. Teams need hands-on workflow design, multi-model orchestration practice, and governance implementation.

The world is changing fast, but not for everyone.

I'm curious when companies will start investing real money in hands-on AI education instead of dumping people into theory courses and hoping for the best.

Or maybe we're all waiting until one of those AI-first service companies eats our markets before our teams learn how to build agents.

Frequently Asked Questions

Should we standardize on one AI model or use multiple?

Use multiple. Microsoft just declared Copilot "model diverse by design" with automatic failover between OpenAI and Anthropic. Single-model strategies create vendor lock-in and single points of failure. Build workflows that work across GPT, Claude, and Gemini. Standardize on orchestration patterns, not models.

What's the difference between Claude Marketplace and ChatGPT Apps?

Claude Marketplace is about procurement. Enterprises spend their Anthropic commitment on partner solutions (Harvey, GitLab, Snowflake) with consolidated billing. ChatGPT Apps is about in-chat capabilities (Canva, Spotify, Expedia). One owns the budget line, one owns the chat surface.

Why did Claude beat ChatGPT in downloads during the Pentagon dispute?

AP/Sensor Tower reported Claude outpaced ChatGPT in U.S. app downloads the week of March 2-8, 2026, explicitly linking it to the Pentagon standoff. Trust translated directly to distribution. Consumers voted with downloads.

Are we anywhere near peak AI adoption?

No. Anthropic's Economic Index shows AI usage concentrates in software development and writing (nearly half of all usage), while Transportation and Material Moving, Healthcare Support, and Farming, Fishing, and Forestry are barely touched. Only a small slice of occupations use AI across at least 75% of their tasks. Meanwhile, directive delegation rose from 27% to 39% in eight months (Anthropic's September 2025 report). The peak is years away.

What's the most important AI skill for teams in 2026?

Multi-model orchestration. Knowing when to use GPT for speed, Claude for reasoning, Gemini for search integration. New AI literacy includes model selection criteria, cross-platform prompt design, governance for model diversity, and evaluating outcome vendors. Theory courses don't teach this.

Ready to build multi-model workflows for your team? Join our hands-on workshops where we teach orchestration patterns and outcome delivery, not just prompt engineering.
